This post summarizes the main ideas of baobab’s presentation during the first Gurobi Industry Day dedicated to the telecom industry.

When I was asked to give a talk about the application of prescriptive analytics to the telecom industry, I thought I should start from the core concept of what prescriptive analytics is. From there, I would discuss whether the expected evolution of telecom networks and services will require these kinds of analytical technologies.

Going to the basics, prescriptive analytics is about optimal decision-making. It is about going beyond the pure predictions of most advanced analytics techniques and enabling the selection of the best options in your day-to-day decisions as a business manager.

Optimality in your decision making is always connected to a scarce resource. In other words, something is only relevant to your business if it is scarce; nothing is really a key component if it is fully available. Think about it in your own case: equipment, human resources, budget, space in a store, time to deliver a product or service…

The Network is the Key Resource of a Telecom Company

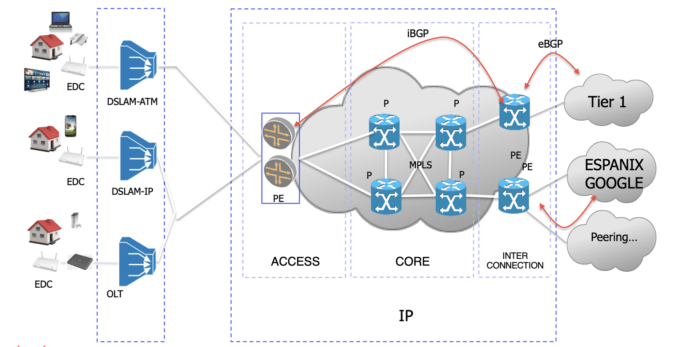

Instead of detailing every scarce resource a telecom company requires to do business, and the kinds of decisions that are good candidates for optimization, I’d rather focus on the key asset of a telecom company: its own network. Following this approach, it seems a sensible starting point (and a perfect excuse) to include a picture from my network design classes at the university. In the figure, you’ll find some of the key protocols and techniques of state-of-the-art network design that we usually teach in our classes.

We teach our students BGP, the protocol that governs the relationship between networks (ASes, Autonomous Systems, to use the common name for the different administrative domains within the Internet). Using BGP, an AS discovers the available network destinations, advertises its own to others and selects the best options based on business criteria. All in all, BGP determines the network demand and the interdomain flows our network will have to cope with.

The figure also depicts a topological paradigm: the Hierarchical Architecture. Routers within the IP Core Network are deployed in different layers, and interconnections are established to avoid single points of failure within that architecture.

MPLS is the third ingredient I want to highlight. It is a protocol that eases traffic engineering. Using MPLS you can distribute flows in a way not strictly tied to the shortest paths, or even split a flow across two paths to make more efficient use of your infrastructure.

Students are also taught how to re-route traffic in case of failures, and how to calculate the required capacity for every connection, considering possible failures and the corresponding rerouting of flows.
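To make that last exercise concrete, here is a minimal sketch of the kind of calculation I have in mind. It is a simplification under stated assumptions, not a planning tool: the four-node ring, the demand volumes, hop-count shortest paths and single-link failures are all illustrative choices of mine, and the function names are hypothetical.

```python
from collections import deque

def shortest_path(links, src, dst):
    """Fewest-hop path over undirected links (BFS); None if unreachable."""
    adj = {}
    for a, b in links:
        adj.setdefault(a, []).append(b)
        adj.setdefault(b, []).append(a)
    parent = {src: None}
    queue = deque([src])
    while queue:
        u = queue.popleft()
        if u == dst:
            break
        for v in sorted(adj.get(u, [])):  # sorted for deterministic ties
            if v not in parent:
                parent[v] = u
                queue.append(v)
    if dst not in parent:
        return None
    path, node = [], dst
    while node is not None:
        path.append(node)
        node = parent[node]
    return path[::-1]

def link_loads(links, demands):
    """Load on each link when every demand follows its shortest path."""
    loads = {link: 0.0 for link in links}
    for (src, dst), volume in demands.items():
        path = shortest_path(links, src, dst)
        if path is None:
            raise ValueError(f"demand {src}->{dst} cannot be routed")
        for a, b in zip(path, path[1:]):
            key = (a, b) if (a, b) in loads else (b, a)
            loads[key] += volume
    return loads

def required_capacity(links, demands):
    """Worst load per link over the normal state and each single-link failure."""
    worst = link_loads(links, demands)
    for failed in links:
        surviving = [link for link in links if link != failed]
        for link, load in link_loads(surviving, demands).items():
            worst[link] = max(worst[link], load)
    return worst

# Illustrative data (assumed): a four-node ring and two demands in Gbps.
ring_links = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "A")]
ring_demands = {("A", "B"): 4, ("A", "C"): 2}
ring_capacity = required_capacity(ring_links, ring_demands)
```

In this toy ring, the links C-D and D-A carry nothing in normal operation, yet they need capacity for the case in which A-B fails and both demands travel the long way round.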

The key question is whether network planning is a good scenario for optimization technologies. It seems so: there is room for mathematics to improve this design process. And a quick review of the state of the art shows that networks are a classic field for optimization algorithms. Just to give you some examples, consider these two well-known network problems (taken from Eiji Oki, “Linear Programming and Algorithms for Communication Networks”):

- The maximum flow problem, which calculates the largest flow that can pass through the network between a given pair of vertices.

- The min-cost flow problem, which looks for the cheapest set of routes able to carry a particular flow (the shortest path problem is a special case).
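As an illustration of the first of these problems, the sketch below computes a maximum flow with the Edmonds-Karp algorithm, one of the standard textbook methods (I am not claiming it is the formulation used in Oki's book, which works with linear programming). The small network and its capacities are made up for the example.

```python
from collections import deque

def max_flow(capacity, source, sink):
    """Edmonds-Karp: augment along fewest-hop residual paths until none is left."""
    if source == sink:
        raise ValueError("source and sink must differ")
    # Residual capacities, with a zero-capacity reverse arc for every arc.
    residual = {}
    for (u, v), cap in capacity.items():
        residual.setdefault(u, {})
        residual.setdefault(v, {})
        residual[u][v] = residual[u].get(v, 0) + cap
        residual[v].setdefault(u, 0)

    total = 0
    while True:
        # Breadth-first search for an augmenting path in the residual graph.
        parent = {source: None}
        queue = deque([source])
        while queue and sink not in parent:
            u = queue.popleft()
            for v, cap in residual[u].items():
                if cap > 0 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if sink not in parent:
            return total  # no augmenting path left: the flow is maximum

        # Bottleneck capacity along the path found.
        bottleneck = float("inf")
        v = sink
        while parent[v] is not None:
            bottleneck = min(bottleneck, residual[parent[v]][v])
            v = parent[v]

        # Push the bottleneck along the path and update reverse arcs.
        v = sink
        while parent[v] is not None:
            u = parent[v]
            residual[u][v] -= bottleneck
            residual[v][u] += bottleneck
            v = u
        total += bottleneck

# Illustrative directed network (assumed), capacities in Gbps.
example_links = {
    ("A", "B"): 10, ("A", "C"): 5,
    ("B", "C"): 15, ("B", "D"): 5,
    ("C", "D"): 10,
}
example_max_flow = max_flow(example_links, "A", "D")
```

Here the result is limited by the two links leaving A (10 + 5 Gbps), which is exactly the kind of bottleneck a planner wants to spot before deciding where to add capacity.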

However, from talking to some close friends working at telecom companies in Spain, I gather that they don’t take full advantage of optimization (and they should).

The reason is that access networks require much more investment than the IP Core, and they don’t want to take risks. Therefore, their main recipe for designing the IP core is basically to oversize capacity: they make simple calculations (like our students), based on the mean and peak traffic for each flow, and deploy far more capacity than needed to be prepared for extraordinary situations (the kind of recipe that proved useful throughout the pandemic and its unexpected increase in demand).
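A back-of-the-envelope version of that recipe might look like the sketch below. The 50% headroom, the 10 Gbps module size and the traffic samples are assumptions I chose for illustration; they are not figures from any operator.

```python
import math

def oversized_capacity(peak_gbps, headroom=0.5, module_gbps=10):
    """Peak traffic plus a safety headroom, rounded up to whole modules."""
    target = peak_gbps * (1 + headroom)
    modules = math.ceil(target / module_gbps)
    return modules * module_gbps

# Illustrative traffic samples for one flow, in Gbps (assumed values).
samples_gbps = [8, 12, 23, 9, 8]
mean_gbps = sum(samples_gbps) / len(samples_gbps)
peak_gbps = max(samples_gbps)

deployed_gbps = oversized_capacity(peak_gbps)
mean_utilization = mean_gbps / deployed_gbps
```

With these numbers the link is sized at 40 Gbps and runs at 30% utilization on average, which shows both why the recipe is safe and why it is expensive.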

What I’d like to discuss in this blog post is whether this recipe can keep working as a stable solution for new-generation networks. I will argue that it cannot, and bet on the idea that optimization and future telecom networks will align their paths in the short term.

5G is a Huge Promise

The future of telecom networks is definitely 5G, but the leap set by the standards is so big that this future will become reality little by little. I will just try to draw some conclusions from what 5G outlines for that future, but you can delve into the main arguments by reading Rath Vannithamby & Anthony Soong’s magnificent “5G Verticals”.

Firstly, 5G is a huge promise, because expectations are extraordinarily high: more coverage, huge data rates, extremely low latencies…

At the same time, it’s well known that 5G is the first mobile service generation not strictly related to innovation in the waveform. Of course, there are new proposals for the radio side: densification is not the main solution for improving wireless capacity anymore (in other words, the cell concept starts to fade away), and 5G prescribes multi-connectivity, the ability to meet a device’s demand by aggregating capacity from different radio connections, even from different stations.

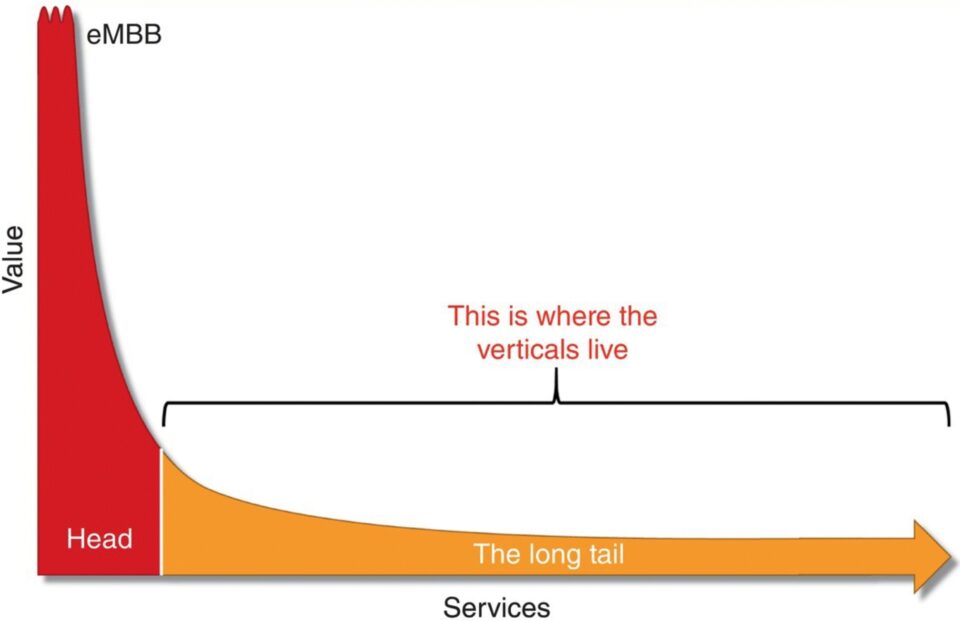

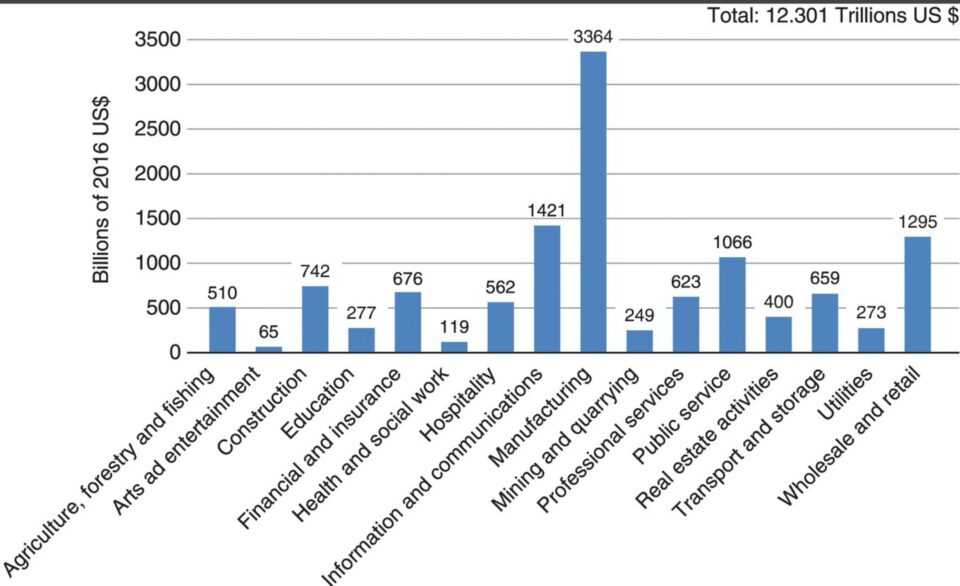

In my opinion, the most innovative direction set by 5G is that a huge number of new users are waiting to join the network and enjoy its services: machines. Moreover, given that we are quite close to having one mobile device per person on earth, these new non-human users will be the best growth strategy for telecom companies. eMBB (enhanced mobile broadband) is the standard name of the broadband service for humans. As a service, it is going to be a massive one, but 5G also anticipates a massive long tail of new business based on specific verticals (with manufacturing as a key vertical expecting a revolutionary future).

If we review some of the major technical trends that will serve as the basis for making 5G a reality, it becomes quite clear that, broadly speaking, they all add flexibility to networks.

- NFV stands for Network Function Virtualization, that is, the ability to decouple network functions from the physical network infrastructure. This technique will allow us to run networking on generic hardware and to flexibly deploy different network architectures within the same infrastructure.

- SDN (Software Defined Networks) aims to decouple the data and control planes, making programmable networks possible. Control plane functions can be changed in software because the relationship with the data plane that handles packets is standardized through APIs. Different behaviours can be achieved just by configuring devices through software.

But the most impressive idea is network slicing.

A network slice is a logical network that provides specific network capabilities and characteristics to a single customer. The key requirement is isolation: no slice may be affected by adverse conditions in another.

But, in fact, they share the same infrastructure. The need for advanced orchestration is clear: we have to allocate resources in a deeply configurable infrastructure to meet very diverse and complex needs, subject to conditions that are dynamic.
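To give a flavour of what such an allocation decision looks like, here is a deliberately tiny sketch: choosing which slice requests to admit on shared bandwidth and compute so that total value is maximized. Everything in it is an assumption for illustration (the slice names other than eMBB, the resource figures, the values, and the exhaustive search, which only works at toy sizes); a real orchestrator would face far more resources and dynamic conditions, and would rely on a proper optimization solver.

```python
from itertools import combinations

def best_admission(requests, capacity):
    """Exhaustively pick the feasible set of slices with the highest total value.

    Each admitted slice gets a hard reservation of every resource it needs,
    which is a simple way of modelling isolation between slices.
    """
    best_value, best_set = 0, ()
    names = sorted(requests)
    for size in range(1, len(names) + 1):
        for subset in combinations(names, size):
            usage = {
                resource: sum(requests[n]["needs"][resource] for n in subset)
                for resource in capacity
            }
            if all(usage[r] <= capacity[r] for r in capacity):
                value = sum(requests[n]["value"] for n in subset)
                if value > best_value:
                    best_value, best_set = value, subset
    return best_value, best_set

# Illustrative shared infrastructure and slice requests (all values assumed).
shared_capacity = {"bandwidth_gbps": 100, "cpu_cores": 64}
slice_requests = {
    "embb":     {"needs": {"bandwidth_gbps": 70, "cpu_cores": 16}, "value": 10},
    "factory":  {"needs": {"bandwidth_gbps": 20, "cpu_cores": 40}, "value": 8},
    "metering": {"needs": {"bandwidth_gbps": 10, "cpu_cores": 8},  "value": 3},
    "video":    {"needs": {"bandwidth_gbps": 40, "cpu_cores": 24}, "value": 6},
}
admitted_value, admitted_slices = best_admission(slice_requests, shared_capacity)
```

Even in this toy case the answer is not obvious at a glance: the best choice fills both resources exactly and leaves out the "video" slice, although that slice is worth more than "metering" on its own.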

Conclusions: Networks & Optimization Will Align

Thinking about the three main ingredients of a good optimization scenario (a scarce resource, a complex environment in which a decision must be made, and a huge impact on business), it is pretty obvious that 5G is a near-perfect optimization cocktail, which we can summarize this way:

- The increase in complexity is due to new services, with strong requirements, that must be supported by a common infrastructure with a new set of available alternatives.

- Scarcity is due to the massive demand for bandwidth.

- The impact on future business is obvious: most telecom companies’ growth will depend on the new verticals joining our networks.

To sum up, I would like to outline some key conclusions:

- Regarding telecom networks, in the future foreseen by 5G standards there will be more decisions, they will be more complex, and they will have a higher impact on KPIs.

- If we need to offer new services to specific verticals (the long tail), the ability to control the common infrastructure will be key to gaining competitiveness by reducing the time-to-market of these new services.

- Some of the current recipes for network design and control will decline: Will it be enough to let network vendors develop generic optimization/orchestration solutions? Will just oversizing capacity be the main strategy to meet user demands?

My bet is that they won’t be feasible solutions anymore. Telecom networks and optimization technologies are bound to converge in the short term.